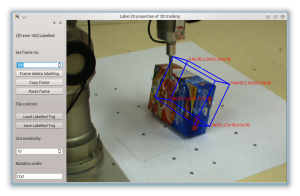

As part of my project on monocular 3D model-based tracking, I created a GUI tool for labelling object poses in video sequences. The tool interpolates poses between labelled frames, so the user does not have to label every frame by hand.
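
The interpolation scheme the tool uses isn't described here. As a minimal sketch, assuming each labelled pose is stored as a translation plus a unit quaternion `(w, x, y, z)`, one common choice is to interpolate translation linearly and rotation with spherical linear interpolation (slerp) between the two nearest labelled frames. The function names and the keyframe dictionary layout below are hypothetical.

```python
import math
from bisect import bisect_right


def slerp(q0, q1, t):
    """Spherical linear interpolation between unit quaternions (w, x, y, z)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    # q and -q encode the same rotation; flip one to take the shorter arc.
    if dot < 0.0:
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:
        # Nearly identical rotations: linear interpolation is stable here.
        q = tuple(a + t * (b - a) for a, b in zip(q0, q1))
    else:
        theta = math.acos(dot)
        s0 = math.sin((1.0 - t) * theta) / math.sin(theta)
        s1 = math.sin(t * theta) / math.sin(theta)
        q = tuple(s0 * a + s1 * b for a, b in zip(q0, q1))
    # Renormalise to guard against floating-point drift.
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)


def interpolate_pose(keyframes, frame):
    """Return (translation, quaternion) at `frame`.

    keyframes: hypothetical dict mapping labelled frame index ->
    ((x, y, z), (w, x, y, z)). Frames outside the labelled range
    are clamped to the first or last labelled pose.
    """
    idx = sorted(keyframes)
    if frame <= idx[0]:
        return keyframes[idx[0]]
    if frame >= idx[-1]:
        return keyframes[idx[-1]]
    # Find the labelled frames f0 <= frame < f1 that bracket this frame.
    k = bisect_right(idx, frame)
    f0, f1 = idx[k - 1], idx[k]
    t = (frame - f0) / (f1 - f0)
    (p0, q0), (p1, q1) = keyframes[f0], keyframes[f1]
    p = tuple(a + t * (b - a) for a, b in zip(p0, p1))
    return p, slerp(q0, q1, t)


if __name__ == "__main__":
    # Frame 0: identity; frame 10: moved 10 units along x, rotated 90 degrees about z.
    kf = {
        0: ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0, 0.0)),
        10: ((10.0, 0.0, 0.0), (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))),
    }
    print(interpolate_pose(kf, 5))
```

With only two labelled frames, the unlabelled frame 5 receives a pose halfway along in translation and a 45 degree rotation about z. Slerp is used rather than interpolating Euler angles because it moves at constant angular speed and avoids gimbal-lock artefacts.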

The idea is to use this labelled data with image-space error measures, since a human labeller is unlikely to judge the depth of objects with any precision. This is acceptable because, in the first instance, we are aiming for human-level performance. This idea is discussed further in sections 3.7 and 7.2 of my thesis.
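
The exact error measure from the thesis isn't reproduced here. As an illustrative sketch only, assuming a pinhole camera with intrinsics `(fx, fy, cx, cy)` and poses stored as a translation plus unit quaternion, an image-space error can be computed as the mean pixel distance between model points projected under the labelled pose and under the estimated pose. All function names and parameters are hypothetical.

```python
import math


def quat_to_matrix(q):
    """Rotation matrix from a unit quaternion (w, x, y, z)."""
    w, x, y, z = q
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def project(points, pose, fx, fy, cx, cy):
    """Project object-frame 3D points to pixels with a pinhole camera."""
    t, q = pose
    R = quat_to_matrix(q)
    pixels = []
    for p in points:
        # Transform the point into the camera frame: X = R p + t.
        X = [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
        if X[2] <= 0:
            raise ValueError("point is behind the camera")
        pixels.append((fx * X[0] / X[2] + cx, fy * X[1] / X[2] + cy))
    return pixels


def image_space_error(points, labelled_pose, estimated_pose, intrinsics):
    """Mean pixel distance between the two projections of the model points."""
    a = project(points, labelled_pose, *intrinsics)
    b = project(points, estimated_pose, *intrinsics)
    return sum(math.dist(u, v) for u, v in zip(a, b)) / len(points)


if __name__ == "__main__":
    K = (500.0, 500.0, 320.0, 240.0)  # assumed intrinsics
    pts = [(0.0, 0.0, 0.0)]
    ident = (1.0, 0.0, 0.0, 0.0)
    # A pure depth change of a point on the optical axis is invisible in the image...
    print(image_space_error(pts, ((0.0, 0.0, 5.0), ident), ((0.0, 0.0, 6.0), ident), K))
    # ...whereas a small sideways shift is not.
    print(image_space_error(pts, ((0.1, 0.0, 5.0), ident), ((0.0, 0.0, 5.0), ident), K))
```

The usage example shows why such a measure suits human-labelled data: moving a point along the camera's line of sight changes its depth but not where it appears in the image, so depth errors the labeller cannot perceive are not penalised.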

The labelling tool can be found here:

https://bitbucket.org/damienjadeduff/label_vid_3d

If you make use of this software, please cite our paper:

Duff, D. J., Mörwald, T., Stolkin, R., & Wyatt, J. (2011). Physical simulation for monocular 3D model based tracking. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Shanghai, China. IEEE. doi:10.1109/ICRA.2011.5980535. Preprint available at eprints.bham.ac.uk/978.